Are you wondering what ChatGPT 4.1 brings to the table? With artificial intelligence evolving at breakneck speed, OpenAI has once again pushed the boundaries with their latest model family. Whether you're a developer looking to build better applications, a business owner seeking AI solutions, or simply curious about the latest AI capabilities, understanding ChatGPT 4.1 is essential.

This comprehensive guide covers everything you need to know about OpenAI's newest AI model, from its groundbreaking features to practical applications. We'll explore how it stacks up against previous versions and help you decide if it's the right choice for your needs.

What Is ChatGPT 4.1?

When OpenAI announced GPT-4.1 on April 14, 2025, it marked a pivot from the traditional upgrade cycle. Unlike previous releases that chased general-purpose reasoning, GPT-4.1 was built directly from developer feedback. The result: a model that outperforms GPT-4o across the board, with major gains in coding, instruction following, and long-context understanding.

By May 14, 2025, GPT-4.1 had expanded beyond the API into ChatGPT itself, and GPT-4.1 mini replaced GPT-4o mini for all ChatGPT users, including free-tier customers. This broad availability matters: developers can try a GPT-4.1-family model in ChatGPT regardless of subscription level.

What makes GPT-4.1 distinctly different isn't just power; it's efficiency. The flagship model operates approximately 40% faster than its predecessors while reducing operational costs by up to 80% in certain scenarios. For teams running high-volume API calls, this changes the economics considerably.

GPT-4.1 Core Improvements

Enhanced Coding Performance for Real-World Tasks

GPT-4.1's standout strength is software engineering. On SWE-bench Verified—a benchmark that tests whether models can read code repositories, identify issues, and produce patches that compile and pass tests—GPT-4.1 completes 54.6% of tasks, compared to 33.2% for GPT-4o. This 21-point jump reflects a genuine shift in capability.

The practical improvements matter more than benchmarks:

- Code diff handling: GPT-4.1 nearly doubles GPT-4o's performance on Aider's polyglot diff benchmark, reaching 52.9% accuracy on code diffs across multiple languages and formats. For developers reviewing pull requests, this means fewer extraneous edits and faster code reviews.
- Extraneous edits: In internal evaluations, unnecessary code changes dropped from 9% (GPT-4o) to 2%.
- Frontend development: In OpenAI's comparison, human raters preferred GPT-4.1's web app output over GPT-4o's 80% of the time.

One key reason: GPT-4.1 follows instructions literally. Unlike GPT-4o, which might infer intent and add unnecessary improvements, GPT-4.1 does exactly what you specify—a massive advantage in controlled development workflows.

Superior Instruction Following Across All Domains

GPT-4.1 scored 49.1% on OpenAI's internal instruction-following benchmark (hard subset), nearly double GPT-4o's 29.2%. This precision extends beyond code:

- Tax research: Blue J reported 53% higher accuracy on its most challenging real-world tax scenarios, which require careful reasoning over lengthy legislative text.
- Data analysis: Hex saw a nearly 2x improvement on its hardest SQL evaluation set, where ambiguous database schemas make query generation difficult.
- Document review: Thomson Reuters' CoCounsel improved multi-document review accuracy by 17%, with better handling of contradictory clauses and cross-references.

For businesses automating workflows, this reliability can reduce the need for extensive prompt engineering: tasks that previously needed several attempts and expert refinement are more likely to succeed on the first try.

The Game-Changer: 1 Million Token Context Window

All three GPT-4.1 variants support 1 million tokens of context, roughly 750,000 words. That is nearly 8 times GPT-4o's maximum capacity of 128K tokens, and it comes at no additional per-token cost.

To visualize the scale: 1 million tokens equals more than 8 complete copies of the entire React codebase, or a 600-page legal contract plus related case law in a single prompt. This enables:

- Full codebase analysis: Load an entire project and ask the model to identify architectural issues or refactoring opportunities.
- Long-document retrieval: Process research papers, regulatory documents, or contract libraries without chunking.
- Agentic workflows: Systems can keep complete conversation history plus external knowledge bases in context, with far fewer token constraints.
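Before loading a whole codebase or contract library, it helps to check whether it is likely to fit. The sketch below is a rough, hypothetical pre-flight check: it assumes about 4 characters per token (a common rule of thumb for English text, not an exact tokenizer count) and assumes the reserved output budget counts against the same window. The helper names are illustrative and not part of any OpenAI library.

```python
CONTEXT_WINDOW_TOKENS = 1_000_000  # GPT-4.1 family (rounded down)
GPT4O_CONTEXT_TOKENS = 128_000     # GPT-4o, for comparison
MAX_OUTPUT_TOKENS = 32_768         # GPT-4.1 API output cap cited later in this article
CHARS_PER_TOKEN = 4                # assumption: rough heuristic, varies by language and content


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str],
                    reserved_output: int = MAX_OUTPUT_TOKENS,
                    window: int = CONTEXT_WINDOW_TOKENS) -> bool:
    """True if the estimated prompt plus the reserved output budget fits in the window."""
    prompt_tokens = sum(estimate_tokens(doc) for doc in documents)
    return prompt_tokens + reserved_output <= window


# About 400,000 characters (~100K tokens) fits easily in GPT-4.1's window,
# but not in GPT-4o's once 32K tokens are reserved for the answer.
corpus = ["x" * 400_000]
print(fits_in_context(corpus))                                # GPT-4.1
print(fits_in_context(corpus, window=GPT4O_CONTEXT_TOKENS))   # GPT-4o
```

For exact numbers, count tokens with a real tokenizer rather than the character heuristic; the heuristic is only meant to flag obviously oversized inputs early.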

However, a caveat: with very large contexts, models can still miss or confuse important details. Retrieving a single "needle" works well, but finding and disambiguating several similar needles in a "haystack" remains challenging. GPT-4.1 outperforms GPT-4o at context lengths up to 128K tokens and maintains strong performance even up to 1 million tokens, but the task remains hard, even for advanced reasoning models.
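If long-context retrieval matters for your workload, a quick way to probe it is a synthetic needle-in-a-haystack test. The following is a minimal, hypothetical harness: it only builds prompts and scores replies with a naive substring match; sending the prompts to GPT-4.1 is left to whatever client you use. The filler sentences and the "vault code" needle are made up for illustration.

```python
import random


def build_haystack(needle: str, filler: list[str], n_filler: int,
                   depth: float, seed: int = 0) -> str:
    """Hide `needle` at a relative depth (0.0 = start, 1.0 = end) inside random filler."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    rng = random.Random(seed)  # seeded so test cases are reproducible
    sentences = [rng.choice(filler) for _ in range(n_filler)]
    sentences.insert(round(depth * n_filler), needle)
    return " ".join(sentences)


def found_needle(model_answer: str, expected: str) -> bool:
    """Naive scoring: case-insensitive substring match."""
    return expected.lower() in model_answer.lower()


# Sweep needle positions to see where retrieval starts to fail.
needle = "The vault code is 4417."
filler = ["The weather was mild.", "Shipping resumed on Tuesday.", "Nothing else happened."]
prompts = {
    depth: build_haystack(needle, filler, 5_000, depth) + "\n\nWhat is the vault code?"
    for depth in (0.0, 0.25, 0.5, 0.75, 1.0)
}
```

A single needle is the easy case; to approximate the harder multi-needle setting described above, insert several similar needles and ask for a specific one.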

Performance, Speed, and Cost Efficiency

GPT-4.1 doesn't sacrifice quality for speed. Across the latency curve, it delivers faster responses with lower hallucination rates than GPT-4o. The cost advantage is dramatic:

| Model | Input Cost | Output Cost | Latency vs GPT-4o | Best For |
|---|---|---|---|---|
| GPT-4.1 | $2/M tokens | $8/M tokens | 40% faster | Maximum performance, complex logic |
| GPT-4.1 Mini | $0.40/M tokens | $1.60/M tokens | 50% faster | General-purpose; 83% cheaper than GPT-4o |
| GPT-4.1 Nano | $0.10/M tokens | $0.40/M tokens | Fastest | Classification, autocompletion, high-throughput |

GPT-4.1 mini matches or exceeds GPT-4o in intelligence evals while reducing latency by nearly half and reducing cost by 83%. For teams still using GPT-4o, switching to the mini variant alone can cut API costs significantly with little or no noticeable quality loss.
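To see what these per-million-token prices mean for a real workload, a small estimator is enough. This is a minimal sketch that assumes the GPT-4.1 list prices at launch and ignores cached-input and Batch API discounts; the traffic numbers in the example are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_m: float   # USD per 1M input tokens
    output_per_m: float  # USD per 1M output tokens


# GPT-4.1 list prices at launch; verify against OpenAI's pricing page before budgeting.
PRICES = {
    "gpt-4.1": Price(2.00, 8.00),
    "gpt-4.1-mini": Price(0.40, 1.60),
    "gpt-4.1-nano": Price(0.10, 0.40),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request, ignoring cached-input and batch discounts."""
    p = PRICES[model]
    return (input_tokens * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000


def monthly_cost(model: str, requests_per_day: int, avg_input: int,
                 avg_output: int, days: int = 30) -> float:
    """Projected monthly spend for a steady workload."""
    return request_cost(model, avg_input, avg_output) * requests_per_day * days


# Hypothetical workload: 10,000 requests/day, 2,000 input + 500 output tokens each.
for name in PRICES:
    print(f"{name}: ${monthly_cost(name, 10_000, 2_000, 500):,.2f}/month")
```

For that hypothetical workload the estimate comes to about $2,400 a month on the flagship, $480 on Mini, and $120 on Nano, which is why routing routine traffic to the smaller variants matters at scale.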

Multimodal Input (Text-Only Output)

Like GPT-4o, GPT-4.1 accepts image inputs—charts, diagrams, screenshots—for analysis. It performs competently on vision tasks:

- MMMU benchmark: Answering image-based questions on charts, diagrams, and maps
- CharXiv: Reading and interpreting charts from academic papers
- Vision math: Solving visual reasoning problems

However, GPT-4.1 outputs text only. It cannot generate images, audio, or video. If you need image or audio output, GPT-4o (or a dedicated generation model) remains the choice.
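Image input works through the same request format used with GPT-4o. The sketch below assumes the official `openai` Python package (v1 or later) and the Chat Completions `image_url` content part with an inline base64 data URL; `describe_image` and its defaults are hypothetical helpers, and the reply is text only, as described above.

```python
import base64


def image_message(prompt: str, image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Build a Chat Completions user message pairing a text prompt with an inline image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            {"type": "image_url",
             "image_url": {"url": f"data:{mime_type};base64,{encoded}"}},
        ],
    }


def describe_image(path: str, prompt: str, model: str = "gpt-4.1-mini") -> str:
    """Send one image to the model and return its text reply (needs OPENAI_API_KEY)."""
    from openai import OpenAI  # imported lazily so the payload builder works without the SDK

    with open(path, "rb") as f:
        message = image_message(prompt, f.read())
    client = OpenAI()
    response = client.chat.completions.create(model=model, messages=[message])
    return response.choices[0].message.content
```

For example, `describe_image("chart.png", "Summarize the trend in this chart.")` would send a local PNG (the file name is illustrative); pass `mime_type="image/jpeg"` to `image_message` for JPEG files.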

The Three GPT-4.1 Variants Explained

GPT-4.1: The Flagship Model

GPT-4.1 delivers the complete feature set with maximum intelligence. It's ideal for tasks requiring highest accuracy: complex reasoning, large codebases, multi-step workflows, and strict instruction adherence. Available via OpenAI API and ChatGPT Plus/Pro subscriptions, it supports the full 1 million token context window.

Typical use cases: Production coding systems, legal AI applications, enterprise automation, research analysis.

GPT-4.1 Mini: The Balanced Choice

GPT-4.1 Mini matches or exceeds GPT-4o on many benchmarks while cutting latency by nearly half and cost by 83%. It replaced GPT-4o mini in ChatGPT for all users, including the free tier, and is increasingly the sensible choice for most developers.

Benchmarks show Mini competes with the flagship on vision tasks and general reasoning. For everyday development—code review, documentation, debugging—the speed and cost savings far outweigh any marginal quality difference.

Typical use cases: Customer-facing applications, content generation, general-purpose chatbots, small business automation.

GPT-4.1 Nano: Ultra-Fast and Cost-Effective

GPT-4.1 Nano is OpenAI's first nano-sized model. Despite its small size, it keeps the full 1 million token context window and scores 80.1% on MMLU, 50.3% on GPQA, and 9.8% on Aider polyglot coding, all higher than GPT-4o mini.

Nano isn't a compromise model; it's purpose-built. It excels at classification, autocompletion, real-time moderation, and high-throughput applications where latency matters more than maximum accuracy.

Typical use cases: High-frequency API calls, latency-sensitive features, spam detection, content tagging, real-time chat filtering.
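One way to act on these descriptions is a small routing table that picks a variant per request. This is a hypothetical rule-of-thumb router, not an OpenAI feature: the task labels are invented for illustration and should be replaced with your application's own categories.

```python
# Hypothetical task labels; map them to whatever categories your application uses.
NANO_TASKS = {"classification", "autocompletion", "moderation", "tagging"}
FLAGSHIP_TASKS = {"large_codebase", "legal_analysis", "multi_step_workflow"}


def choose_model(task: str, needs_max_accuracy: bool = False) -> str:
    """Pick a GPT-4.1 variant using the rules of thumb described in this section."""
    if needs_max_accuracy or task in FLAGSHIP_TASKS:
        return "gpt-4.1"        # highest accuracy, highest cost
    if task in NANO_TASKS:
        return "gpt-4.1-nano"   # cheapest and fastest for simple, high-volume work
    return "gpt-4.1-mini"       # balanced default for everything else
```

Defaulting to Mini and only escalating when a task is explicitly flagged keeps costs predictable; the explicit `needs_max_accuracy` override lets a caller force the flagship for a single request.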

GPT-4.1 vs GPT-4o: The Direct Comparison

| Capability | GPT-4.1 | GPT-4o | Winner |
|---|---|---|---|
| Coding (SWE-bench Verified) | 54.6% | 33.2% | 🏆 GPT-4.1 (+21.4 pts) |
| Instruction Following (hard subset) | 49.1% | 29.2% | 🏆 GPT-4.1 (+19.9 pts) |
| Code Diff Accuracy (Aider polyglot) | 52.9% | ~26% | 🏆 GPT-4.1 (~2x) |
| Context Window | 1M tokens | 128K tokens | 🏆 GPT-4.1 (~8x larger) |
| Speed (latency) | 40% faster | Baseline | 🏆 GPT-4.1 |
| Cost (Mini tier) | 83% cheaper | Baseline | 🏆 GPT-4.1 |
| Image Generation | No (text output only) | Yes, in ChatGPT | 🏆 GPT-4o |
| Audio Output | No | Yes, via GPT-4o audio models | 🏆 GPT-4o |
| Multimodal Input | Yes | Yes | Tie |
| Knowledge Cutoff | June 2024 | October 2023 | 🏆 GPT-4.1 |

The Decision Framework:

Choose GPT-4.1 if you're building production systems that need top-tier coding ability, processing large documents, or automating complex multi-step workflows where instruction precision matters.

Choose GPT-4o only if you require image or audio generation, or if you're locked into legacy integrations. Otherwise, GPT-4.1 Mini likely outperforms it at lower cost.

How to Access GPT-4.1 and Pricing Plan

Through ChatGPT

Access depends on your subscription:

- Free users: GPT-4.1 Mini, which replaced GPT-4o mini (no model selection needed)
- Plus users ($20/month): Choice of GPT-4.1 Mini or the full GPT-4.1
- Pro users ($200/month): GPT-4.1 and GPT-4.1 Mini (GPT-4.1 Nano is available only through the API)
- Team/Enterprise: Full access with administrative controls

As of May 2025, GPT-4.1 and GPT-4.1 Mini are live in ChatGPT. Free-tier users automatically benefit from GPT-4.1 Mini's improvements, since it replaced GPT-4o mini.

Via OpenAI API (For Developers)

The API provides the most flexibility. Use model identifiers:

- gpt-4.1 (flagship, $2 input / $8 output per million tokens)
- gpt-4.1-mini ($0.40 input / $1.60 output per million tokens)
- gpt-4.1-nano ($0.10 input / $0.40 output per million tokens)

API access supports the full 1 million token context window and increased output limits (32,768 tokens, doubled from GPT-4o's 16,384). This is critical for large file rewrites.
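A thin wrapper can keep requests within these limits. The sketch below assumes the official `openai` Python SDK and the Chat Completions `max_completion_tokens` parameter, and assumes the 32,768-token output cap applies to all three variants; `build_request` is a hypothetical helper, not part of the SDK.

```python
GPT41_MODELS = {"gpt-4.1", "gpt-4.1-mini", "gpt-4.1-nano"}
MAX_OUTPUT_TOKENS = 32_768  # assumed to apply to all three GPT-4.1 variants


def build_request(model: str, messages: list[dict], requested_output: int) -> dict:
    """Assemble Chat Completions arguments, clamping the output budget to the cap."""
    if model not in GPT41_MODELS:
        raise ValueError(f"unknown GPT-4.1 model: {model!r}")
    if requested_output <= 0:
        raise ValueError("requested_output must be positive")
    return {
        "model": model,
        "messages": messages,
        "max_completion_tokens": min(requested_output, MAX_OUTPUT_TOKENS),
    }


# Usage with the official SDK (needs `pip install openai` and OPENAI_API_KEY):
#   from openai import OpenAI
#   client = OpenAI()
#   kwargs = build_request("gpt-4.1", [{"role": "user", "content": "Rewrite this file: ..."}], 50_000)
#   reply = client.chat.completions.create(**kwargs)
#   print(reply.choices[0].message.content)
```

Clamping on the client side turns an oversized request into a shorter answer rather than an API error; if a full-file rewrite needs more than the cap, split the file instead.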

Through Third-Party Platforms

GitHub Copilot, GitHub Models, and other development tools now support GPT-4.1 natively. Microsoft offers access via Azure OpenAI, and IDEs such as Windsurf and Cursor have integrated GPT-4.1 for a seamless in-editor experience.

Tip: If you run into problems with ChatGPT, you may find a solution here: https://gptproto.com/blog/why-is-chat-gpt-not-working

Limitations and Important Caveats

Fine-Tuning: A Notable Gap

Fine-tuning support has not rolled out uniformly across the GPT-4.1 family, so check OpenAI's fine-tuning documentation for which snapshots (gpt-4.1, gpt-4.1-mini, gpt-4.1-nano) currently accept fine-tuning jobs before planning a project around it.

For domain-specific tasks, the workaround is fine-tuning Mini for tone and style, then routing edge cases to the flagship.
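The routing half of that workaround can be very small. This is a hypothetical sketch that assumes you already have a fine-tuned Mini model: the model ID below is a placeholder, and the keyword-and-length heuristic for spotting edge cases is a toy that you would replace with your own classifier or rules.

```python
from typing import Callable

# Hypothetical placeholder: substitute the model ID returned by your own fine-tuning job.
FINE_TUNED_MINI = "ft:gpt-4.1-mini:your-org::example"
FLAGSHIP = "gpt-4.1"


def looks_like_edge_case(prompt: str, max_chars: int = 8_000,
                         keywords: tuple[str, ...] = ("indemnif", "jurisdiction", "regulation")) -> bool:
    """Toy heuristic: treat very long prompts or ones with risky keywords as edge cases."""
    text = prompt.lower()
    return len(prompt) > max_chars or any(k in text for k in keywords)


def route(prompt: str, is_edge_case: Callable[[str], bool] = looks_like_edge_case) -> str:
    """Send routine traffic to the fine-tuned Mini and escalate edge cases to the flagship."""
    return FLAGSHIP if is_edge_case(prompt) else FINE_TUNED_MINI
```

Passing the edge-case test in as a function keeps the router unchanged when you later swap the heuristic for something more robust, such as a Nano-based classifier.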

Literal Interpretation Requires Careful Prompting

Unlike previous OpenAI models, GPT-4.1 models follow prompts far more literally, which changes how you should write and structure them. GPT-4.1 no longer infers implicit rules; it does exactly what you tell it to do, no more and no less.

This is usually an advantage (more predictable output), but it means your existing prompts may behave differently. Test thoroughly before migrating production workloads.
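In practice, migrating a prompt often means writing down the rules an older model used to infer. The sketch below is one hypothetical way to do that: a helper that turns each expectation into a numbered, explicit instruction. The role, rules, and output format in the example are illustrative only.

```python
def build_system_prompt(role: str, rules: list[str], output_format: str) -> str:
    """Spell out every expectation as a numbered rule instead of relying on inference."""
    lines = [f"You are {role}.", "Follow every rule below exactly:"]
    lines += [f"{i}. {rule}" for i, rule in enumerate(rules, start=1)]
    lines.append(f"Output format: {output_format}")
    return "\n".join(lines)


# Rules an older model might have inferred on its own, now stated explicitly (illustrative).
prompt = build_system_prompt(
    role="a careful code reviewer",
    rules=[
        "Only change the function named in the request.",
        "Keep the existing code style and naming conventions.",
        "If the request is ambiguous, ask one clarifying question instead of guessing.",
    ],
    output_format="a unified diff with no extra commentary",
)
print(prompt)
```

Keeping rules in a list also makes prompt changes easy to diff and test, which helps when comparing old and new prompts side by side during migration.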

Model Behavior Under Load

While processing near-maximum context (900K+ tokens), performance can degrade slightly on some reasoning tasks. The model also shows fewer "creative leaps," preferring conservative answers when context is vast.

Vision Output Unavailable

GPT-4.1 cannot generate, edit, or manipulate images. If your workflow requires image generation, you'll need DALL-E 3 or a separate image model.

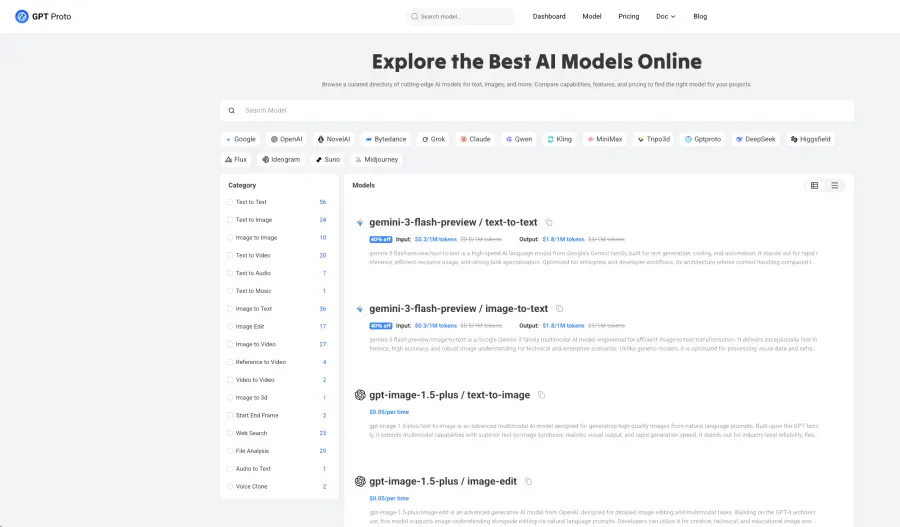

GPT Proto: Your Gateway to ChatGPT 4.1

For developers and teams integrating GPT-4.1 into production systems, API access is critical—but managing multiple vendor connections can complicate workflows. This is where unified AI platforms become valuable.

GPT Proto is an API aggregation platform that consolidates access to multiple leading AI models, including the complete GPT-4.1 family (4.1, 4.1-mini, and 4.1-nano), all from a single integration point. Rather than maintaining separate connections to OpenAI, Anthropic, Google, and other providers, teams can standardize on one well-documented API layer.

The practical advantages for production teams:

- Reduced integration overhead: One API endpoint instead of managing separate authentication and rate limits across vendors
- Flexible model switching: Test or swap models (GPT-4.1 to Claude to Gemini) without rewriting application code
- Improved cost tracking: Centralized billing and usage monitoring across all AI providers
- Lower latency: Globally distributed endpoints optimize response times for geographically dispersed users
- Simplified documentation: One API reference instead of memorizing different vendor specifications

For teams already using the OpenAI API directly, GPT Proto isn't a requirement—OpenAI's API is mature and reliable. However, for organizations evaluating multi-model strategies or managing complex API environments, consolidation platforms reduce operational burden significantly.

GPT Proto's primary value proposition targets teams at scale: companies running hundreds of concurrent requests, maintaining multiple applications, or needing fallback options when one provider experiences service degradation. The platform also appeals to agencies and consultants building client solutions where vendor flexibility matters.
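Whether you use an aggregator or call providers directly, the fallback idea itself is simple. The sketch below is provider-agnostic and hypothetical: it does not use GPT Proto's API (whose interface this article doesn't document), and each "provider" is just a function from prompt to text that you would back with a real SDK call.

```python
from typing import Callable, Sequence

# A provider is any function that takes a prompt and returns text (hypothetical interface).
Provider = Callable[[str], str]


def complete_with_fallback(prompt: str,
                           providers: Sequence[tuple[str, Provider]]) -> tuple[str, str]:
    """Try providers in order; return (provider_name, reply) from the first that succeeds."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # in practice, narrow this to your SDKs' timeout/rate-limit errors
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def primary(prompt: str) -> str:
    """Stand-in for a provider experiencing degradation."""
    raise TimeoutError("upstream timed out")


def secondary(prompt: str) -> str:
    """Stand-in for a healthy backup provider."""
    return f"echo: {prompt}"


print(complete_with_fallback("ping", [("primary", primary), ("secondary", secondary)]))
```

Returning the provider name alongside the reply makes it easy to log how often the fallback path is actually used.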

If your workflow is simple—single application, single model—OpenAI's API remains the straightforward choice. If your environment is complex, a unified platform can streamline development and reduce maintenance costs over time.

Final Thoughts – Is ChatGPT 4.1 Worth It?

Yes, if you code, analyze documents, or need precise instruction following. GPT-4.1 Mini costs 83% less than GPT-4o while delivering faster responses and comparable or better results on many benchmarks. The 1 million token context window removes a major constraint for developers working with large codebases.

For production teams, the math is simple: test it (GPT-4.1 Mini is available even on ChatGPT's free tier), measure the improvement, and migrate where the ROI is clear. The coding benchmarks speak for themselves: 54.6% on SWE-bench Verified versus 33.2% for GPT-4o isn't a marginal gain.

Start with GPT-4.1 Mini. You'll likely see cost savings immediately, and quality improvements should become measurable as you evaluate it on your own workloads. The only reasons not to switch are a need for image or audio generation or a lock-in to legacy systems. Everything else points toward GPT-4.1.

Conclusion

GPT-4.1 is no longer a luxury upgrade; it's the practical default for teams serious about coding efficiency and cost reduction, with the flagship scoring 54.6% on SWE-bench Verified and the Mini variant costing 83% less than GPT-4o. Whether you integrate through ChatGPT, OpenAI's API, or unified platforms like GPT Proto AI API Provider that consolidate multiple AI providers, the competitive advantage is clear.