Experience the Future of Logic with Grok 4.20 beta 0309 reasoning on GPT Proto

Artificial intelligence is shifting from simple text generation toward multi-step logical deduction, and the Grok 4.20 beta 0309 reasoning model is built for that shift. Developed by xAI and now fully integrated into our high-performance infrastructure, this model gives developers and businesses deeper reasoning through a commercial API. Whether you are building complex legal analysis tools or autonomous coding agents, you can explore this model and others in our model library.

Mastering Complex Logic with Grok 4.20 beta 0309 reasoning Advanced Thought Traces

One of the most significant changes in the Grok 4.20 beta 0309 reasoning model is its "thinking-first" design. Like other LLMs it still generates text token by token, but it first works through an internal chain of thought before delivering a final answer. On GPT Proto, we provide the low-latency environment needed to handle these "reasoning traces" efficiently, so that while the model takes time to think through a problem, delivery to your end user remains fast and reliable. This makes it a strong choice for tasks where accuracy is non-negotiable and the intermediate steps of logic matter as much as the final output.

Architecting Multi-Step Technical Solutions with Precision Reasoning Steps

For developers, the Grok 4.20 beta 0309 reasoning API is well suited to debugging and system architecture. Because the model is optimized for "fast-reasoning," it can decompose a large software engineering problem into smaller, logical modules and check for edge cases and security vulnerabilities as it generates. When you integrate this model on GPT Proto, you gain a virtual senior architect that doesn't just write code but explains the "why" behind every function. This can reduce the time spent on manual code reviews and improve the reliability of AI-generated software components in production environments.

Streamlining Long-Form Conversations via Stateful API Response Chaining

Standard AI interactions are often "stateless," meaning you have to resend the entire conversation history with every new prompt, which increases cost and slows processing. The Grok 4.20 beta 0309 reasoning model supports a Responses API that allows stateful interactions. Because previous input prompts and model responses are stored on the server side, you can continue a complex dialogue simply by referencing a previous response ID. On GPT Proto, we optimize this chaining process, allowing your applications to maintain deep context over thousands of tokens without resending the full history on every turn, which keeps long conversations coherent at lower overhead.
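To make the chaining idea concrete, here is a minimal sketch of how the request body for each turn might be assembled. It makes no network calls. The field names (`input`, `previous_response_id`), the model identifier, and the response ID are assumptions modelled on Responses-style APIs, not confirmed GPT Proto values, so check the API documentation for the actual schema.

```python
from typing import Optional

# Hypothetical model identifier; use the exact ID listed in the model library.
MODEL = "grok-4.20-beta-0309-reasoning"


def build_response_request(
    model: str,
    user_input: str,
    previous_response_id: Optional[str] = None,
) -> dict:
    """Build the JSON body for one turn of a chained conversation.

    Field names here are assumptions, not a confirmed schema.
    """
    body = {"model": model, "input": user_input}
    if previous_response_id is not None:
        # Only the new message and a reference to the stored turn are sent;
        # the earlier history stays on the server.
        body["previous_response_id"] = previous_response_id
    return body


# First turn: nothing to reference yet.
first_turn = build_response_request(MODEL, "Summarize clause 4 of the contract.")

# Follow-up turn: "resp_123" stands in for the ID the server returned earlier.
follow_up = build_response_request(
    MODEL,
    "Does clause 7 contradict it?",
    previous_response_id="resp_123",
)
```

Note that the follow-up body carries only the new question and the reference ID, which is where the savings over resending the whole history would come from.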

"Grok 4.20 beta 0309 reasoning is not just a language model; it is a logical engine that maintains state and depth, providing a foundation for the next generation of autonomous agents on GPT Proto."

Seamlessly Integrate Grok 4.20 beta 0309 reasoning via the GPT Proto Developer Hub

Transitioning to a reasoning-based model often comes with technical hurdles, but GPT Proto removes these barriers through our unified API gateway. We provide comprehensive SDK support and an OpenAI-compatible REST interface that makes switching to Grok 4.20 beta 0309 reasoning as simple as changing a single line of code in your configuration. Our platform ensures high availability and enterprise-grade security for your data, handling the complexities of gRPC and encrypted thinking traces in the background. To get started with your first implementation, refer to our API documentation and integration guide.
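As a rough illustration of the "single line" switch, the sketch below prepares an OpenAI-style chat request using only the Python standard library. The base URL, the `/chat/completions` path, and the model identifier are placeholders assumed for illustration; the real values come from the API documentation. The request is built but not sent.

```python
import json
import urllib.request

# Placeholder values (assumptions): replace with those from the API documentation.
BASE_URL = "https://api.example.com/v1"
MODEL = "grok-4.20-beta-0309-reasoning"  # the one line to change when switching models


def build_chat_request(api_key: str, prompt: str) -> urllib.request.Request:
    """Prepare (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("YOUR_API_KEY", "Explain this stack trace.")
# With real values in place, urllib.request.urlopen(request) would send it.
```

Keeping the model name in one constant means the rest of the integration stays untouched when you move between models.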

| Feature Comparison | Standard Legacy Models | Grok 4.20 beta 0309 reasoning on GPT Proto |
|---|---|---|
| Logical Depth | Pattern Matching Only | Deep Chain-of-Thought Reasoning |
| Context Handling | Stateless / Manual History | Stateful Responses API Chaining |
| Processing Speed | Variable | Optimized Fast-Reasoning Throughput |
| Cost Efficiency | High Token Overhead | Cached Prompt Savings & Chaining |

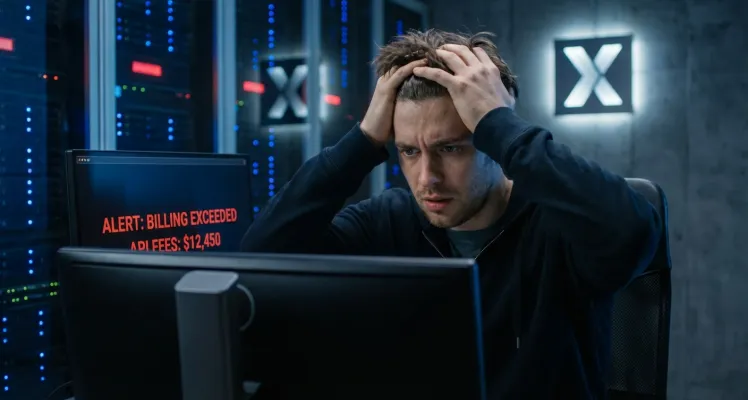

Transparent Usage and Instant Balance Top-ups for Your Grok API Workflows

At GPT Proto, we believe that advanced AI should be accessible through a transparent and fair pricing model. Unlike platforms that lock you into complex monthly subscriptions or confusing credit systems, we operate on a direct-fund basis. You simply top up your balance with the exact amount you wish to spend, and those funds are deducted in real time based on your actual API usage. This "pay-as-you-go" approach ensures that you only pay for the reasoning power you actually consume, making it easier for startups and individual developers to scale their projects without financial surprises.
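The deduction arithmetic can be sketched as follows. The per-token prices are invented for illustration and are not GPT Proto's actual rates; the sketch also assumes that usage is billed as input tokens plus output tokens, which may not match how reasoning tokens are counted in practice.

```python
from decimal import Decimal

# Hypothetical prices in USD per one million tokens (not real rates).
INPUT_PRICE_PER_M = Decimal("2.00")
OUTPUT_PRICE_PER_M = Decimal("10.00")


def request_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Cost of one request under the assumed per-million-token prices."""
    total = (
        Decimal(input_tokens) * INPUT_PRICE_PER_M
        + Decimal(output_tokens) * OUTPUT_PRICE_PER_M
    )
    return total / Decimal(1_000_000)


def deduct(balance: Decimal, input_tokens: int, output_tokens: int) -> Decimal:
    """Return the balance left after paying for one request."""
    cost = request_cost(input_tokens, output_tokens)
    if cost > balance:
        raise ValueError("insufficient balance; top up before retrying")
    return balance - cost


balance = Decimal("5.00")  # amount topped up
balance = deduct(balance, input_tokens=1_200, output_tokens=800)
```

Using `Decimal` rather than floats avoids rounding drift when many small charges are subtracted from the same balance.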

Monitoring your performance and spend is equally effortless. By visiting your personal usage dashboard, you can see detailed breakdowns of your token consumption, response times, and total spend across all Grok models. This granular data allows you to optimize your prompts and manage your budget with total precision. For more tips on how to maximize the efficiency of reasoning models or to stay updated on the latest releases from xAI and other vendors, be sure to follow our official GPT Proto blog for expert insights and tutorials.