Grok 3 Mini API: High-Speed Intelligence for Efficient Workflows

Developers looking for a balance between speed and utility can now explore all available AI models, including the latest Grok 3 Mini release. This model targets workloads where larger, more expensive models would be overkill.

Grok 3 Mini Performance Metrics for Production APIs

Grok 3 Mini represents a shift toward specialized, smaller-scale intelligence. Early testers highlight its ability to handle menial tasks and straightforward coding assistance. Unlike some competitors that feel restricted by heavy-handed alignment, Grok 3 Mini delivers a more direct output style. Users often describe the experience as having 'no sunshine and rainbows,' meaning the responses stay grounded in the technical requirements of the prompt and skip unnecessary conversational fluff.

When deploying Grok 3 Mini in a production environment, low latency is the primary advantage. The architecture is trimmed for rapid inference, making it well suited to real-time applications like chatbots or automated support systems. While it might struggle with deep logical reasoning compared to its larger siblings, Grok 3 Mini excels in high-throughput scenarios where response time is the critical KPI.
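If response time is the KPI, it helps to measure it directly before committing to a model. The sketch below is one minimal way to collect latency percentiles. It assumes you supply your own `send_request` callable that performs a single API call; that callable, the function names, and the default of 20 runs are illustrative and not part of any Grok or GPTProto SDK.

```python
import math
import time
from typing import Callable, Dict, List


def percentile(sorted_values: List[float], pct: float) -> float:
    """Nearest-rank percentile of an already sorted, non-empty list."""
    if not sorted_values:
        raise ValueError("need at least one sample")
    rank = max(1, math.ceil(pct / 100 * len(sorted_values)))
    return sorted_values[rank - 1]


def measure_latency(send_request: Callable[[], object], runs: int = 20) -> Dict[str, float]:
    """Call send_request `runs` times and summarize wall-clock latency in seconds.

    send_request is a hypothetical zero-argument callable that issues one
    request to the model and blocks until the response arrives.
    """
    samples: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        send_request()
        samples.append(time.perf_counter() - start)
    samples.sort()
    return {
        "p50": percentile(samples, 50),
        "p95": percentile(samples, 95),
        "max": samples[-1],
    }
```

Tail percentiles such as p95 are usually more informative than the average for chatbots, because a handful of slow responses is what users actually notice.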

Grok 3 Pricing Structure and Token Management

Cost management is a central topic for anyone using the Grok 3 Mini API. The current market rate for Grok 3 Mini sits at approximately $0.30 per million input tokens. That is the highest input price among the small models compared later in this article, but it still lets teams scale their operations without the massive overhead associated with frontier models.

"Grok 3 Mini provides a raw performance profile that developers appreciate for scripting and data cleaning, though the moderation cost structure requires careful prompt engineering." — Senior DevOps Engineer.

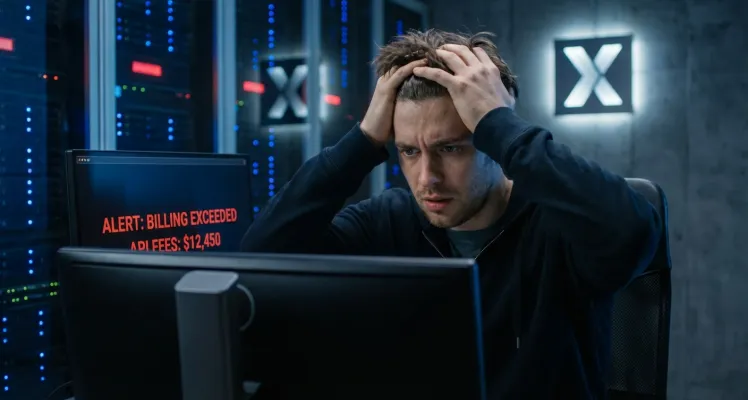

However, one unique aspect of the Grok pricing model is the content moderation penalty. Rejections triggered by content safety filters can incur a flat fee of $0.05 per instance. This makes it vital for developers to read the full API documentation to understand how to structure prompts that comply with the safety layers while still getting the desired technical output.
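To see how the two charges interact, here is a small estimator built only from the figures quoted in this section: roughly $0.30 per million input tokens and a flat $0.05 per moderation rejection. Output-token pricing is not covered here, so the result is a lower bound on the real bill. The function and constant names are illustrative.

```python
# Rates quoted in this article; treat them as approximate and subject to change.
INPUT_PRICE_PER_MILLION_USD = 0.30
MODERATION_REJECTION_FEE_USD = 0.05


def estimate_cost(input_tokens: int, rejected_requests: int = 0) -> float:
    """Estimate spend in USD from input tokens and moderation rejections.

    Output tokens are deliberately excluded because the article does not
    quote a rate for them.
    """
    if input_tokens < 0 or rejected_requests < 0:
        raise ValueError("counts must be non-negative")
    token_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION_USD
    rejection_cost = rejected_requests * MODERATION_REJECTION_FEE_USD
    return round(token_cost + rejection_cost, 6)


if __name__ == "__main__":
    # 10 million input tokens plus 20 rejected requests.
    print(estimate_cost(10_000_000, rejected_requests=20))
```

At these rates, 10 million input tokens cost about $3.00, and 20 rejections add another $1.00. Put differently, a single rejection costs as much as roughly 167,000 input tokens, which is why a prompt that occasionally trips the filter can noticeably inflate a bill.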

Grok Mini vs Competitor Models

In the current AI ecosystem, Grok 3 Mini is frequently compared to models like Gemini 1.5 Flash or various Codex variants. While Gemini might offer broader multimodal capabilities, Grok 3 Mini focuses heavily on text-based efficiency. The following table illustrates how these models stack up on the GPTProto platform:

| Model Identifier | Input Price (USD per 1M tokens) | Best Use Case | Latency Tier |
|---|---|---|---|
| Grok 3 Mini | $0.30 | Coding & Menial Tasks | Ultra-Low |
| Grok 4 Fast | $0.20 | General Chat | Low |
| Gemini 1.5 Flash | $0.075 | Multimodal Logic | Very Low |
| GPT-4o Mini | $0.15 | Reasoning | Low |
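Per-million prices are easier to compare once they are applied to a concrete workload. The following sketch takes the input prices from the table above and computes the input-only cost of a given monthly token volume for each model. The 50 million token workload in the example is an arbitrary illustration, and output-token costs are not included.

```python
from typing import Dict

# Input prices in USD per 1M tokens, copied from the comparison table.
INPUT_PRICE_PER_MILLION_USD: Dict[str, float] = {
    "Grok 3 Mini": 0.30,
    "Grok 4 Fast": 0.20,
    "Gemini 1.5 Flash": 0.075,
    "GPT-4o Mini": 0.15,
}


def input_cost_by_model(input_tokens: int) -> Dict[str, float]:
    """Return the input-only cost in USD of `input_tokens` for each model."""
    if input_tokens < 0:
        raise ValueError("input_tokens must be non-negative")
    millions = input_tokens / 1_000_000
    return {
        model: round(millions * price, 4)
        for model, price in INPUT_PRICE_PER_MILLION_USD.items()
    }


if __name__ == "__main__":
    # Print models from cheapest to most expensive for 50M input tokens.
    costs = input_cost_by_model(50_000_000)
    for model, cost in sorted(costs.items(), key=lambda item: item[1]):
        print(f"{model}: ${cost:.2f}")
```

For 50 million input tokens this works out to about $3.75 for Gemini 1.5 Flash, $7.50 for GPT-4o Mini, $10.00 for Grok 4 Fast, and $15.00 for Grok 3 Mini, so the case for Grok 3 Mini rests on latency and output style more than on input price.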

Optimizing Grok Mini API Usage

Getting the most value out of Grok 3 Mini requires a focused approach to prompt design. Since the model is optimized for speed, providing clear, concise instructions helps prevent the 'randomness' some users report during extended testing. The API usage dashboard lets developers monitor their consumption in real time, so token spend stays within budget without sacrificing output quality.
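As a rough illustration of a focused prompt, the sketch below builds a request body with a terse system instruction, a zero sampling temperature, and a cap on output length. It assumes an OpenAI-style chat completions schema and the model identifier `grok-3-mini`; both are assumptions rather than details confirmed in this article, so check the API documentation for the exact field names and model ID before using it.

```python
from typing import Any, Dict


def build_chat_payload(
    task: str,
    model: str = "grok-3-mini",  # assumed identifier; verify against the docs
    max_tokens: int = 256,
) -> Dict[str, Any]:
    """Build a compact chat request body (OpenAI-style schema assumed)."""
    task = task.strip()
    if not task:
        raise ValueError("task must not be empty")
    return {
        "model": model,
        "messages": [
            # A short system message keeps the model on task.
            {"role": "system", "content": "Answer concisely. Return only the requested output."},
            {"role": "user", "content": task},
        ],
        # Zero temperature reduces run-to-run variation in sampling.
        "temperature": 0.0,
        # Capping output length bounds both latency and spend.
        "max_tokens": max_tokens,
    }
```

Keeping the system message short and pinning the temperature are generic techniques, not anything specific to Grok 3 Mini, but they are a reasonable first step when outputs vary more than expected.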

If you are building creative tools or intelligent agents, you can try GPTProto intelligent AI agents that use Grok 3 Mini for backend logic. This integration allows for stable, high-speed execution of agentic workflows without the complexity of managing individual model connections. GPTProto routes Grok 3 Mini API calls through its own infrastructure, which is intended to minimize downtime during peak usage periods.

Future Outlook for Grok 3 Intelligence

As the Grok 3 family continues to evolve, the Mini variant serves as a critical entry point for developers who need 'just enough' intelligence at a predictable price. Follow the latest AI industry updates to see how future patches affect the Grok 3 Mini coding benchmarks. For now, it remains a strong choice for those who value speed and a less restricted persona over the heavy-duty reasoning of larger LLMs.